AI inference mini PCs are designed to deliver powerful, optimized performance at the edge, helping you handle real-time tasks efficiently. They balance processing power, energy use, and reliability, making them ideal for diverse environments. Hardware components like accelerators, cooling, and modular design play a vital role in durability and long-term operation. If you want to understand how these systems truly perform and adapt, keep exploring the key factors behind their deployment success.

Key Takeaways

- Hardware optimization is crucial for balancing inference performance, power efficiency, and thermal management in real-world AI mini PC deployments.

- Edge AI mini PCs act as autonomous data centers, reducing latency and bandwidth while demanding durable, low-power, and reliable hardware.

- Specialized accelerators like TPUs boost inference speed but require compatible hardware and software integration for optimal results.

- Modular and scalable designs enhance longevity, adaptability, and ease of maintenance in diverse deployment environments.

- Effective thermal management and component selection are essential for continuous operation and hardware longevity in edge settings.

As artificial intelligence applications become more integral to various industries, deploying AI inference efficiently requires compact, powerful hardware. Mini PCs designed for AI inference have gained popularity because they offer a portable, space-saving solution that still has enough processing power for real-time tasks. They are especially useful in edge computing scenarios where data needs to be processed locally rather than sent to distant data centers. By bringing computation closer to the source, you reduce latency, improve response times, and lessen the bandwidth load on networks. But to realize their potential, you need to pay close attention to hardware optimization. It’s not just about choosing a small box with a GPU; it’s about configuring the hardware to maximize performance and energy efficiency for your specific deployment.

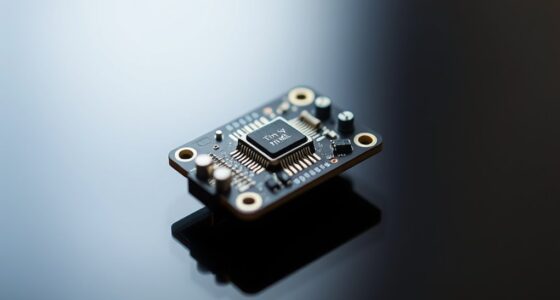

Edge computing is a key factor here. When you deploy AI inference mini PCs at the edge, you’re essentially creating small data centers that operate independently of cloud infrastructure. This setup demands hardware built for durability, low power consumption, and high reliability in diverse environments, so that it can run continuously without overheating or degrading. Choosing the right processor, memory, and cooling solution can make all the difference. For instance, integrating specialized AI accelerators such as Tensor Processing Units (TPUs) directly into your mini PC can notably speed up inference and lower latency, while robust thermal management helps sustain that performance over time.

Selecting components with expandability options helps your mini PC adapt to evolving AI workloads and hardware advancements, and modular design principles make upgrades and repairs easier, extending the lifespan of your hardware investment. Beyond hardware, software optimization is equally important for peak performance and resource management.

Hardware optimization also involves balancing performance with energy use. You may have the latest GPU, but if it consumes too much power or generates excessive heat, it won’t be suitable for deployment in remote or constrained settings. Instead, look for hardware that offers a good compromise: enough computational capability to handle your AI models while remaining energy efficient. Power efficiency in particular can determine whether a long-term deployment in a remote area is feasible at all.
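The balance between compute capability and energy use can be made concrete with a small selection routine. The sketch below is illustrative only: the `Candidate` type, the device names, and every number in it are hypothetical placeholders, not measurements of real products. It drops options that exceed a power budget or miss a throughput floor, then ranks the rest by inferences per second per watt.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """A hypothetical hardware option; all values are placeholders."""
    name: str
    inferences_per_sec: float  # throughput measured on your own model
    watts: float               # sustained power draw under that load


def pick_hardware(candidates, power_budget_w, min_throughput):
    """Return the most power-efficient candidate that fits both limits, or None."""
    viable = [
        c for c in candidates
        if c.watts <= power_budget_w and c.inferences_per_sec >= min_throughput
    ]
    if not viable:
        return None
    # Rank the remaining options by throughput per watt.
    return max(viable, key=lambda c: c.inferences_per_sec / c.watts)


# Made-up figures for illustration; substitute your own measurements.
options = [
    Candidate("discrete-gpu-box", inferences_per_sec=400.0, watts=120.0),
    Candidate("npu-mini-pc", inferences_per_sec=150.0, watts=25.0),
    Candidate("cpu-only-mini-pc", inferences_per_sec=20.0, watts=15.0),
]

best = pick_hardware(options, power_budget_w=40.0, min_throughput=100.0)
print(best.name if best else "no candidate fits")
```

With these placeholder numbers, the fastest device is excluded by the 40 W budget and the lowest-power device misses the throughput floor, which is the kind of compromise the paragraph above describes. Real decisions would also weigh cost, thermals, and software support.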

Not all AI mini PCs are created equal. Some are built with scalability and customization in mind, allowing you to fine-tune hardware for specific applications. Others are more plug-and-play but limited in their ability to adapt as your needs evolve. When you’re considering these systems for real-world deployment, prioritize hardware designed for edge computing environments: durable, optimized, and capable of handling continuous inference workloads. Ultimately, successful AI inference mini PC deployment depends on thoughtful hardware optimization, so that your compact solution delivers its performance where it’s needed most.

As an affiliate, we earn on qualifying purchases.

Frequently Asked Questions

How Do AI Inference Mini PCs Compare to Cloud-Based Solutions?

For latency-sensitive workloads at the edge, AI inference mini PCs often respond faster than cloud-based solutions because requests never leave the local network. They keep working without internet connectivity and keep data on-site, which makes them well suited to sensitive or remote deployments. Cloud solutions offer greater scalability, while mini PCs offer lower latency and stronger data privacy, giving you immediate insights and control. This makes them a practical choice for many on-site AI applications.
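The latency comparison comes down to simple arithmetic: a cloud request pays for the network round trip and data upload on top of inference time, while a local request pays only for inference. The sketch below uses a hypothetical `end_to_end_ms` helper and placeholder figures, not benchmarks, to show how a slower local accelerator can still give the faster overall response.

```python
def end_to_end_ms(inference_ms, network_rtt_ms=0.0, upload_ms=0.0):
    """Total response time for one request, in milliseconds."""
    return inference_ms + network_rtt_ms + upload_ms


# Placeholder figures for illustration only; measure your own deployment.
edge_ms = end_to_end_ms(inference_ms=35.0)
cloud_ms = end_to_end_ms(inference_ms=8.0, network_rtt_ms=60.0, upload_ms=25.0)

print(f"edge:  {edge_ms:.0f} ms")
print(f"cloud: {cloud_ms:.0f} ms")
print("edge is faster" if edge_ms < cloud_ms else "cloud is faster")
```

The result flips if the network is fast and the model is too large for the local hardware, so the comparison is worth rerunning with your own measurements.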

What Are the Key Factors Affecting Mini PC Inference Performance?

Several factors affect mini PC inference performance in edge computing. Hardware comes first: a capable processor, sufficient RAM, and specialized accelerators such as GPUs or TPUs. Efficient cooling and power management matter just as much, because they keep performance consistent under sustained load. Focusing on these factors helps you maximize inference speed and reliability for real-time AI applications in edge environments.
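One way to see how these factors play out on a given device is to measure latency directly rather than rely on spec sheets. The following is a minimal sketch, assuming your model can be wrapped in a plain Python callable; `benchmark` and `fake_infer` are hypothetical names, and `fake_infer` is only a stand-in workload, not a real model.

```python
import statistics
import time


def benchmark(infer, sample, warmup=5, runs=50):
    """Time repeated calls to `infer(sample)` and summarize latency in ms."""
    for _ in range(warmup):
        infer(sample)  # warm caches before timing starts
    latencies = []
    for _ in range(runs):
        start = time.perf_counter()
        infer(sample)
        latencies.append((time.perf_counter() - start) * 1000.0)
    latencies.sort()
    # Simple nearest-rank style index for the 95th percentile.
    p95_index = min(len(latencies) - 1, int(0.95 * len(latencies)))
    return {
        "p50_ms": statistics.median(latencies),
        "p95_ms": latencies[p95_index],
        "max_ms": latencies[-1],
    }


def fake_infer(sample):
    """Stand-in workload; replace with a call into your inference runtime."""
    return sum(x * x for x in sample)


stats = benchmark(fake_infer, list(range(10_000)))
print({k: round(v, 3) for k, v in stats.items()})
```

Running the same harness for an extended period and watching whether the p95 figure drifts upward is a rough way to spot thermal throttling, since a device with inadequate cooling tends to slow down as it heats up.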

Which Industries Benefit Most From AI Inference Mini PCs?

You’ll find AI inference mini PCs especially valuable in industries like healthcare, manufacturing, and retail, where quick decision-making matters. Many edge deployments in these sectors rely on small, power-efficient devices, and mini PCs fit that role by running AI inference at modest power draw. Their compact size and energy efficiency suit real-time data processing, which can improve outcomes and reduce operational costs.

How Secure Are Mini PCs for Sensitive AI Data?

Mini PCs can be quite secure for sensitive AI data if you implement proper cybersecurity measures. Use strong data encryption, apply security updates regularly, keep firmware up to date, enable firewalls, and restrict physical access to the device. Physical access deserves particular attention, because a compact device at the edge is easier to reach or remove than a server in a locked data center. Combined with these practices, mini PCs are suitable for sensitive deployments.

What Is the Typical Lifespan of an AI Inference Mini PC?

AI inference mini PCs typically last around 3 to 5 years. Hardware durability depends on component quality, and the operating environment and maintenance also influence lifespan. High-end models may endure longer with proper care, yet rapid advances in AI hardware can make a device obsolete before it fails. Plan for regular maintenance and periodic upgrades to maximize your mini PC’s operational life.

Conclusion

While some might worry that AI inference mini PCs aren’t powerful enough for real-world deployments, they’re more capable than ever. Modern mini PCs pack impressive processing power, optimized for efficiency and reliability. You don’t need massive servers to run many sophisticated AI tasks; these compact devices can handle them while saving space and cost. So don’t let size fool you: with the right hardware choices, they’re ready for your deployment needs.

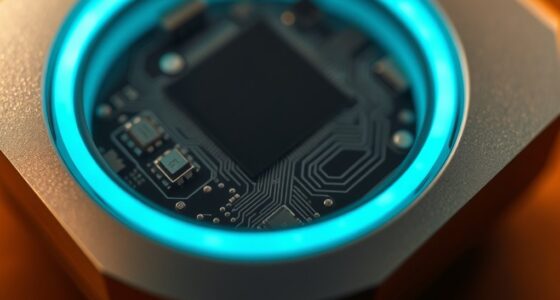
