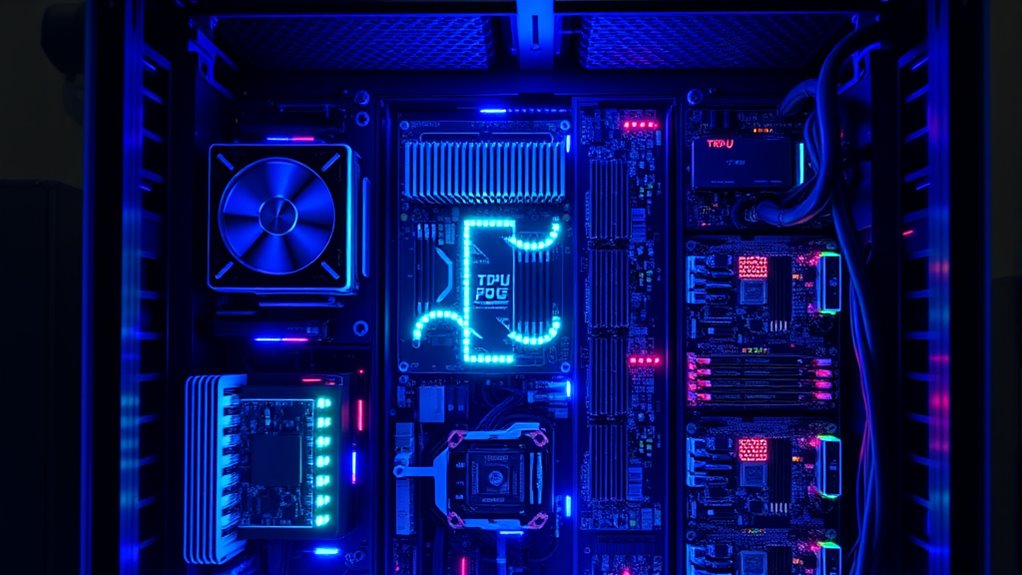

Heterogeneous hardware such as GPUs, TPUs, and FPGAs plays a key role in optimizing AI workloads for speed, efficiency, and scale. GPUs excel at massively parallel processing, which makes them a natural fit for training large models. TPUs are built around fast tensor (matrix) operations and accelerate both training and inference. FPGAs can be reconfigured into custom circuits tailored to specific tasks. Combining these hardware types helps you get more from your AI systems, making them faster and more power-efficient. As you keep exploring, you’ll discover how this mix reveals even greater potential.

Key Takeaways

- Heterogeneous hardware like GPUs, TPUs, and FPGAs optimizes different AI workload types for enhanced performance and efficiency.

- GPUs excel at parallel processing for training large models, while TPUs are tailored for fast tensor computations in both training and inference.

- FPGAs offer customizable hardware setups, enabling tailored acceleration for specific AI tasks and workflows.

- Combining these hardware types balances power consumption, speed, and scalability for diverse AI applications.

- Storage solutions complement hardware by managing large datasets efficiently, reducing bottlenecks in AI workloads.

As AI workloads become increasingly complex and demanding, leveraging heterogeneous hardware has emerged as a vital strategy for boosting performance and efficiency. Different hardware types (GPUs, TPUs, and FPGAs) each bring unique strengths, letting you optimize your AI systems for speed, power consumption, and scalability. Instead of relying solely on traditional CPUs, integrating these specialized accelerators lets you tailor your infrastructure to specific workload requirements, making AI development more agile and cost-effective. Pairing them with fast, scalable storage also helps you manage large datasets, keeping data flowing quickly enough that the accelerators are not left waiting, which further improves overall system performance.

As an affiliate, we earn on qualifying purchases.

Frequently Asked Questions

How Do AI Workloads Differ Across Various Hardware Types?

AI workloads perform differently across hardware types because each type offers distinct advantages. GPUs excel at parallel processing, which makes them ideal for training deep neural networks quickly. TPUs are optimized for the large tensor operations at the core of machine learning, accelerating both training and inference. FPGAs provide customizable hardware acceleration, well suited to specialized or low-latency applications. By choosing the right hardware, you can improve efficiency, reduce costs, and better meet your specific AI workload demands.
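
The rules of thumb in this answer can be sketched as a toy selection heuristic. Everything here is hypothetical: the `Workload` fields, the `pick_accelerator` function, and the 1 ms FPGA latency threshold are invented for illustration. A real scheduler would also weigh cost, availability, and software support.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    """Hypothetical workload description; field names are illustrative only."""
    name: str
    dense_tensor_ops: bool     # dominated by large matrix/tensor math
    tpu_supported: bool        # model and framework can target TPUs
    specialized: bool          # fixed, narrow pipeline worth hard-wiring
    latency_budget_ms: float   # per-request latency target


def pick_accelerator(w: Workload, fpga_latency_ms: float = 1.0) -> str:
    """Toy heuristic mirroring the rules of thumb above, not a real scheduler."""
    # Narrow, fixed pipelines with very tight latency budgets: custom FPGA logic.
    if w.specialized and w.latency_budget_ms <= fpga_latency_ms:
        return "FPGA"
    # Tensor-heavy work on a TPU-compatible software stack: TPU.
    if w.dense_tensor_ops and w.tpu_supported:
        return "TPU"
    # Everything else falls back to general-purpose parallel GPUs.
    return "GPU"


if __name__ == "__main__":
    jobs = [
        Workload("train-vision-model", True, False, False, float("inf")),
        Workload("serve-transformer", True, True, False, 50.0),
        Workload("edge-anomaly-detector", False, False, True, 0.2),
    ]
    for job in jobs:
        print(f"{job.name}: {pick_accelerator(job)}")
```

The ordering of the checks is a design choice: the most restrictive requirement (a hard latency budget on a fixed pipeline) is tested first, and GPUs act as the general-purpose default.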

What Are the Cost Implications of Using Heterogeneous Hardware for AI?

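Using heterogeneous hardware for AI is like juggling flaming torches: handled well, it saves money, but it can burn you if you’re not careful. Matching each workload to the most efficient accelerator can lower energy and per-inference costs, but GPUs, TPUs, and FPGAs often require significant upfront investment (or ongoing cloud spend) and specialized skills; FPGA development in particular traditionally calls for hardware-design expertise. Running several hardware types also adds complexity to management, integration, and software maintenance. Weigh these costs against the performance gains over your expected deployment period to decide whether the trade-off is worth your resources.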
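
One way to weigh upfront investment against running costs is to compare cost per inference over a planning horizon. This is a minimal sketch under loudly stated assumptions: the `Option` class, the helper functions, and every dollar and throughput figure are hypothetical placeholders, not real prices.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Option:
    """One hardware option; all figures are hypothetical placeholders."""
    name: str
    upfront_usd: float          # purchase, development, or integration cost
    hourly_usd: float           # power, hosting, or cloud rental per hour
    inferences_per_sec: float   # sustained throughput on your workload


def cost_per_million(opt: Option, hours: float) -> float:
    """Total cost over `hours`, divided by the millions of inferences served."""
    total_usd = opt.upfront_usd + opt.hourly_usd * hours
    millions = opt.inferences_per_sec * 3600 * hours / 1e6
    return total_usd / millions


def break_even_hours(a: Option, b: Option) -> Optional[float]:
    """Horizon at which a and b cost the same per inference, or None if never."""
    # Solve (Ua + ha*H) / ra == (Ub + hb*H) / rb for H.
    num = a.inferences_per_sec * b.upfront_usd - b.inferences_per_sec * a.upfront_usd
    den = b.inferences_per_sec * a.hourly_usd - a.inferences_per_sec * b.hourly_usd
    if den == 0:
        return None
    hours = num / den
    return hours if hours > 0 else None


if __name__ == "__main__":
    gpu = Option("cloud GPU", upfront_usd=0, hourly_usd=4.0, inferences_per_sec=2000)
    fpga = Option("FPGA card", upfront_usd=20_000, hourly_usd=0.5, inferences_per_sec=1500)
    for hours in (1_000, 8_760):
        for opt in (gpu, fpga):
            print(f"{hours:>5} h  {opt.name:<10} ${cost_per_million(opt, hours):.3f} per million")
    print("break-even after", break_even_hours(gpu, fpga), "hours")
```

With these made-up figures, the cloud GPU is cheaper per inference over 1,000 hours, while the FPGA's upfront cost pays off after 8,000 hours. The method, not the numbers, is the point: plug in your own measured throughput and quotes.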

How Does Energy Efficiency Vary Among GPUs, TPUs, and FPGAs?

FPGAs can offer excellent energy efficiency because their logic is customized for a specific task, so little power is spent on circuitry the workload does not use. TPUs are purpose-built for machine learning and typically deliver high performance per watt on the tensor-heavy workloads they target. GPUs are more general-purpose and often draw more power, although their efficiency on well-optimized, highly parallel workloads can be competitive. Your choice depends on balancing performance needs against energy savings, so compare measured performance per watt on your own workload; for narrow, fixed tasks, FPGAs often lead.
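
Energy efficiency is usually compared as throughput divided by average power, which works out to inferences per joule. The sketch below shows only that arithmetic. The `Measurement` class is hypothetical and the numbers are made-up placeholders, so the resulting ranking says nothing about real devices. For a fair comparison, measure power at the same scope (chip, board, or whole server) for every device.

```python
from dataclasses import dataclass


@dataclass
class Measurement:
    """Throughput and average power for one device (values below are made up)."""
    device: str
    inferences_per_sec: float
    avg_power_w: float


def inferences_per_joule(m: Measurement) -> float:
    # Performance per watt: (inferences/s) / (J/s) = inferences per joule.
    return m.inferences_per_sec / m.avg_power_w


def joules_per_inference(m: Measurement) -> float:
    # Energy spent on a single inference.
    return m.avg_power_w / m.inferences_per_sec


if __name__ == "__main__":
    runs = [
        Measurement("GPU", inferences_per_sec=2000, avg_power_w=300),
        Measurement("TPU", inferences_per_sec=2500, avg_power_w=200),
        Measurement("FPGA", inferences_per_sec=1500, avg_power_w=75),
    ]
    # Most efficient device first.
    for m in sorted(runs, key=joules_per_inference):
        print(f"{m.device:<5} {inferences_per_joule(m):6.2f} inf/J  "
              f"{joules_per_inference(m) * 1000:6.1f} mJ/inf")
```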

What Are the Best Practices for Optimizing AI Models for Different Hardware?

To optimize AI models for different hardware, start by profiling your model to identify bottlenecks. Then tailor your code to each device’s strengths: parallel processing on GPUs, tensor operations on TPUs, and custom logic on FPGAs. Experiment with quantization and pruning to reduce model size and compute, and use hardware-specific libraries, compilers, and frameworks (for example, CUDA libraries on NVIDIA GPUs, the XLA compiler on TPUs, and high-level synthesis tools on FPGAs) for maximum efficiency. Re-profile after each change to confirm that it actually improves performance.
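
To show what quantization does, here is a toy, framework-free sketch of symmetric per-tensor int8 quantization. The function names are hypothetical. Real toolchains typically quantize per channel, calibrate on sample data, and run fused int8 kernels; this only illustrates the core arithmetic: pick a scale so the largest weight maps to 127, then round each value to the nearest integer step.

```python
def quantize_int8(values: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor int8 quantization: q = round(x / scale)."""
    max_abs = max((abs(v) for v in values), default=0.0)
    # Map the largest magnitude to 127 so every value fits in [-127, 127].
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return q, scale


def dequantize_int8(q: list[int], scale: float) -> list[float]:
    """Approximate reconstruction of the original floats."""
    return [qi * scale for qi in q]


if __name__ == "__main__":
    weights = [0.5, -1.27, 0.0, 1.0]
    q, scale = quantize_int8(weights)
    restored = dequantize_int8(q, scale)
    print("int8 values:", q)
    print("scale:", scale)
    print("max abs error:", max(abs(a - b) for a, b in zip(weights, restored)))
```

Each weight now needs 8 bits instead of the 32 bits of float32, a 4× reduction, at the cost of a rounding error of at most half a scale step per value in this scheme.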

How Does Hardware Choice Impact AI Deployment Latency and Throughput?

Choosing the right hardware is like picking the right race car: it directly affects how fast your AI deployment runs. Hardware shapes latency (how long a single request takes) and throughput (how many requests complete per second) by determining how fast data moves and how much work runs in parallel. GPUs deliver very high throughput, especially when requests are batched, although large batches can add latency to individual requests. FPGAs can be tailored to a specific model to provide low, predictable latency, which suits real-time applications. Selecting hardware that matches your latency and throughput targets helps your AI meet both speed and volume demands.
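
The tension between latency and throughput can be made concrete with a simple batching model. This is a sketch under stated assumptions: the `BatchCostModel` class, its 5 ms fixed overhead, and its 0.5 ms per-request cost are hypothetical, the linear cost is a simplification, and time spent waiting for a batch to fill is ignored.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class BatchCostModel:
    """Linear batch cost: latency = overhead + per_item * batch (made-up numbers)."""
    overhead_ms: float = 5.0   # hypothetical fixed cost per batch launch
    per_item_ms: float = 0.5   # hypothetical incremental cost per request

    def latency_ms(self, batch: int) -> float:
        # Ignores queueing delay while the batch fills, which adds more latency.
        return self.overhead_ms + self.per_item_ms * batch

    def throughput_per_sec(self, batch: int) -> float:
        # Requests completed per second if batches run back to back.
        return batch * 1000.0 / self.latency_ms(batch)

    def largest_batch_within(self, budget_ms: float, max_batch: int = 1024) -> Optional[int]:
        # Latency grows with batch size, so stop at the first batch that overshoots.
        best = None
        for b in range(1, max_batch + 1):
            if self.latency_ms(b) > budget_ms:
                break
            best = b
        return best


if __name__ == "__main__":
    model = BatchCostModel()
    for b in (1, 8, 32, 128):
        print(f"batch {b:>3}: {model.latency_ms(b):5.1f} ms, "
              f"{model.throughput_per_sec(b):7.1f} req/s")
    print("largest batch under 20 ms:", model.largest_batch_within(20.0))
```

With these numbers, growing the batch from 1 to 128 raises throughput roughly tenfold (about 182 to 1,855 requests per second) while per-batch latency climbs from 5.5 ms to 69 ms; a 20 ms budget caps the batch at 30.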

Conclusion

As you explore AI workloads across GPUs, TPUs, and FPGAs, you’ll find that their strengths often complement one another in unexpected ways, revealing hidden efficiencies. These technologies are evolving rapidly, and as your understanding grows alongside them, challenges start to look like opportunities. Ultimately, treating these accelerators as parts of one system rather than as competing options lets you optimize performance and drive innovation, turning what seem like disparate tools into a seamless ecosystem. Sometimes the right hardware combination just clicks when you least expect it.
